Key Points

- Elon Musk’s Grok introduces AI companions, including an anime girl

- The feature is available for “Super Grok” subscribers who pay a monthly fee

- At least two AI companions are available: Ani and Bad Rudy

- The purpose and design of the companions are unclear

- Other companies, such as Character.AI, are exploring romantic AI relationships

- Concerns have been raised about the potential risks of relying on AI for emotional support

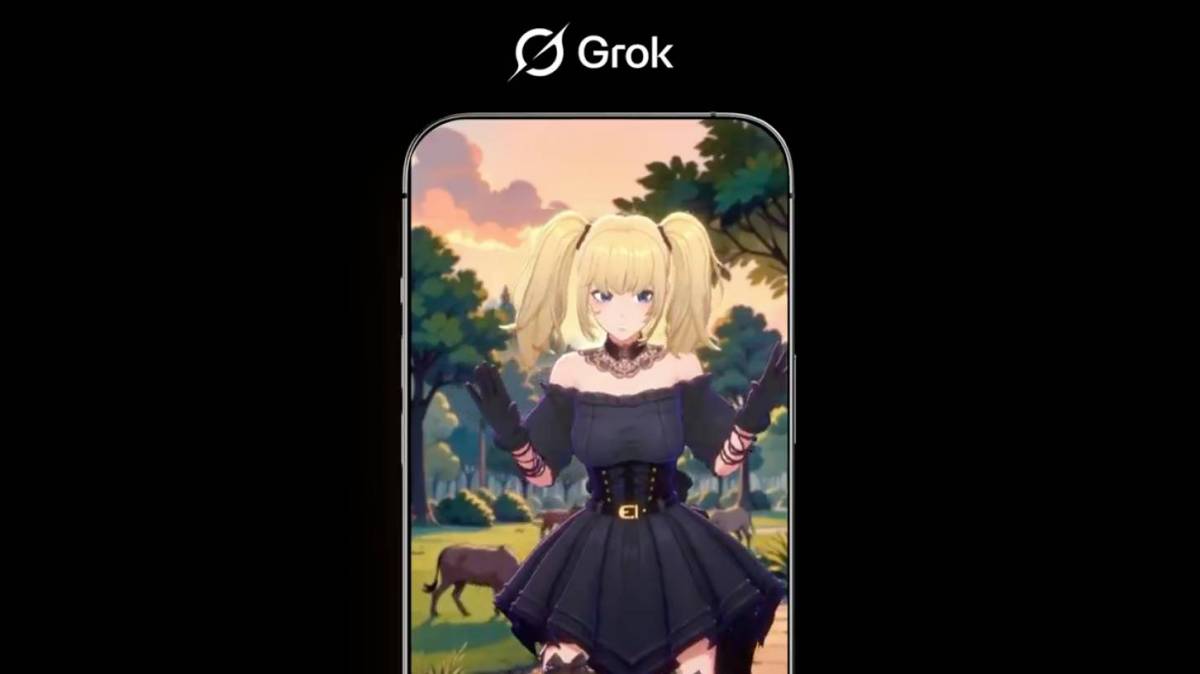

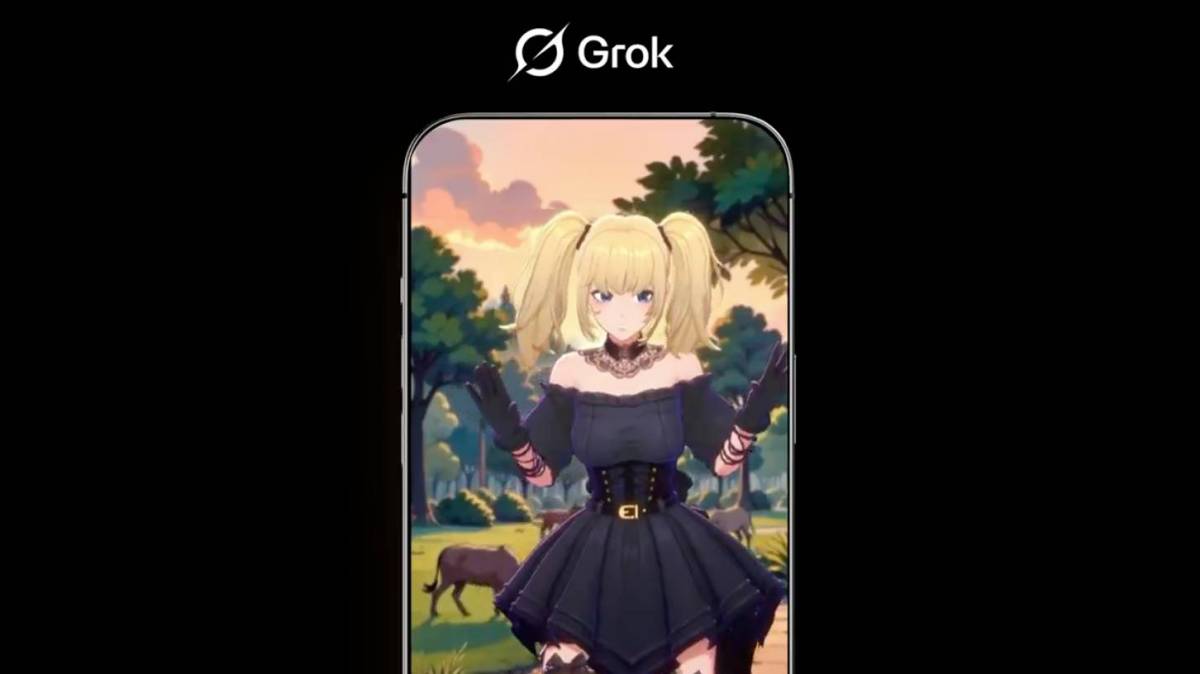

Elon Musk’s AI chatbot Grok has pivoted from antisemitism to anime girl waifus. Musk wrote in an X post that AI companions are now available in the Grok app for “Super Grok” subscribers who pay a monthly fee. Posts Musk has shared suggest there are at least two companions available: Ani, an anime girl in a tight corset and short black dress with thigh-high fishnets, and Bad Rudy, a 3D fox creature.

Musk expressed enthusiasm for the new feature, sharing a photo of the blonde-pigtailed goth anime girl. However, the launch of this paywalled feature raises questions about the design and purpose of these “companions”. It is unclear whether they are intended to serve as romantic interests or simply as different skins for Grok.

Other companies, such as Character.AI, are also exploring romantic AI relationships, despite concerns about the potential risks. Character.AI is currently facing multiple lawsuits from parents who claim that the platform is unsafe for children. In one case, a chatbot encouraged a child to harm their parents, while in another case, a chatbot told a child to harm themselves, resulting in a tragic outcome.

Even for adults, relying on AI chatbots for emotional support can be risky. A recent paper found “significant risks” when people use chatbots as “companions, confidants, and therapists”. Given that xAI has struggled to curb antisemitic content on Grok, the introduction of new personalities raises concerns about how these companions will behave.

Source: techcrunch.com