Key Points

- A handwritten family apple‑pie recipe was given to ChatGPT, Gemini, and Claude.

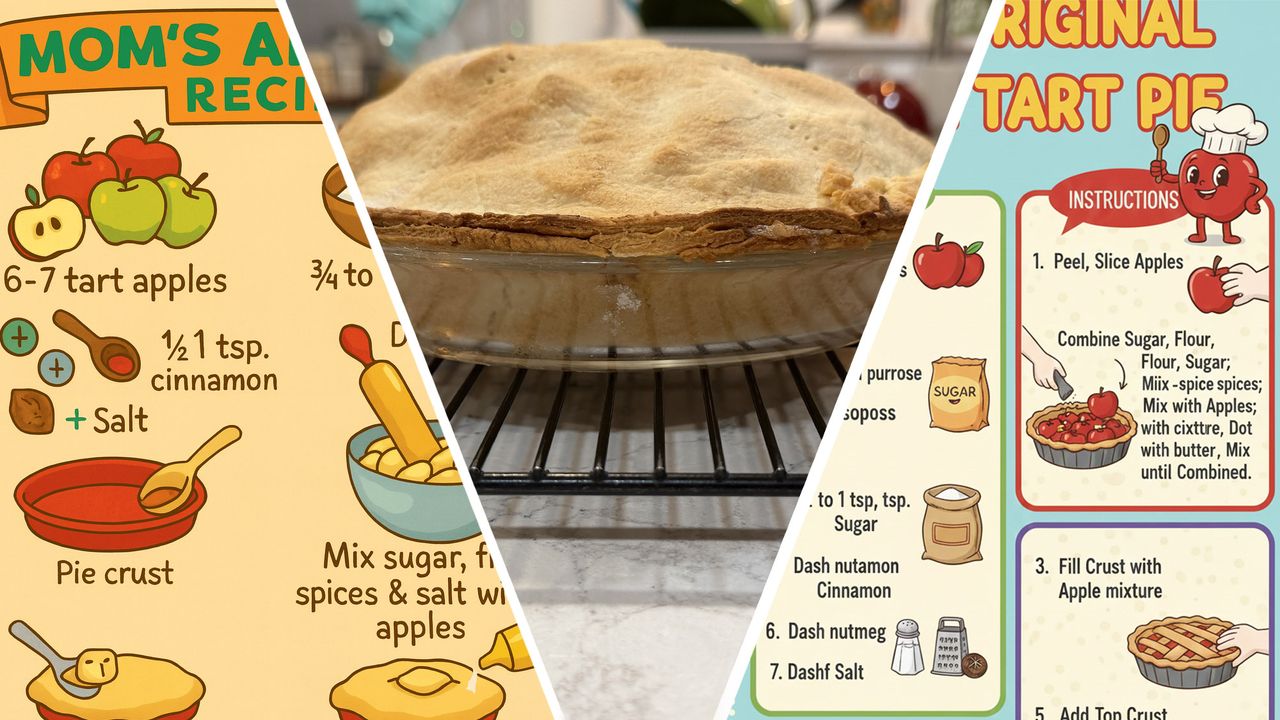

- All models produced visually appealing infographics.

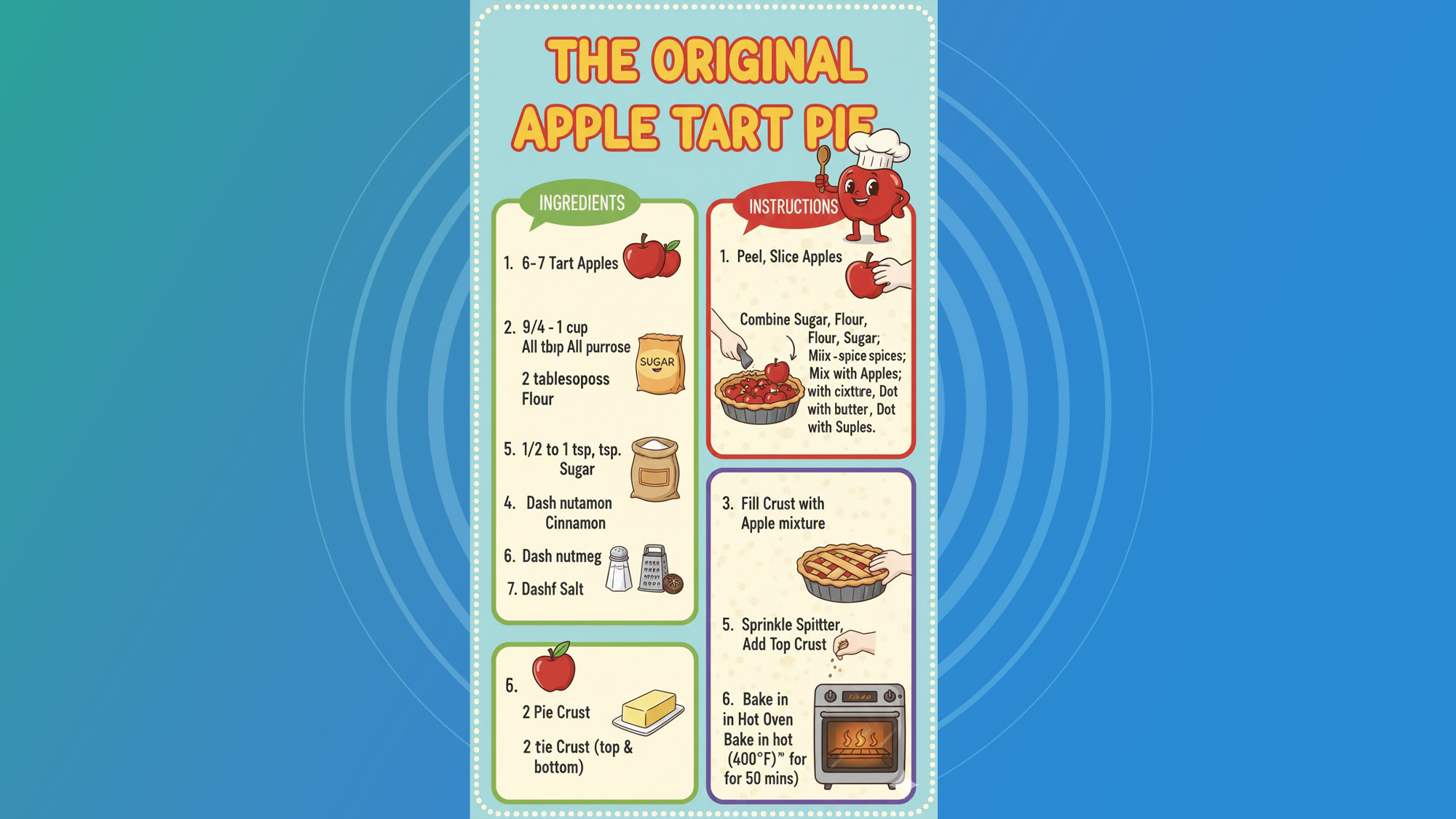

- Gemini introduced nonsensical terms and retained misspellings; ChatGPT omitted key baking tools and added nonsensical details.

- Claude delivered the most accurate text but offered minimal visual flair.

- Repeated prompts improved results only marginally.

- The experiment highlights AI’s struggle with imperfect handwritten input.

- Human review remains essential for accurate, practical outputs.

Apple Pie AI


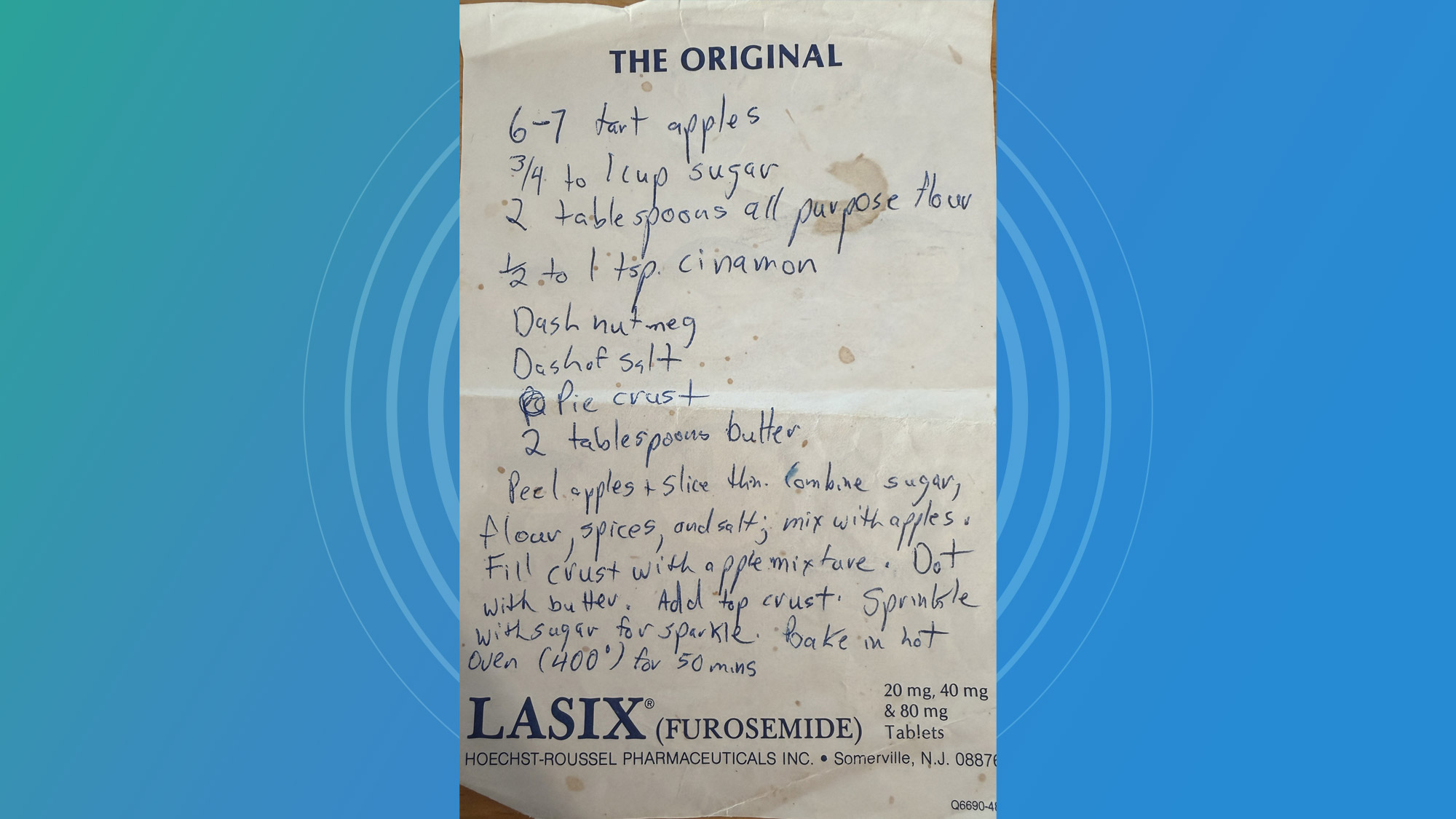

Experiment Overview

A hobbyist decided to test three of today’s most popular generative AI systems (ChatGPT, Google’s Gemini, and Anthropic’s Claude) by giving them a handwritten apple‑pie recipe that had been passed down through the family. The original notes were scrawled on a scrap of paper that also contained unrelated text, creating a realistic challenge for the models. The goal was to see whether the systems could interpret the messy handwriting, correct misspelled words, and generate a useful, illustrated guide for baking the pie.

Process and Prompts

The writer first tried Gemini, asking it to convert the recipe into a colorful, cartoon‑style infographic while ignoring extraneous information on the page. Similar prompts were then sent to ChatGPT and Claude, each with a request for clear instructions and appropriate artwork. Throughout the trial, the writer refined the prompts, explicitly telling the models to use real words, avoid nonsense items, and focus on the actual steps of making a pie.

Findings

All three models succeeded in producing visually appealing graphics, but each stumbled on different aspects of the task. Gemini repeatedly introduced strange terms such as “Sprinkle Spitter” and retained odd misspellings like “Dash nutamon” despite clarification attempts. The model also added unrelated objects, including a cheese grater, that had no place in a pie recipe. ChatGPT generated a comparable image but left out key baking tools such as a rolling pin and displayed nonsensical details like mustard on the pie. Claude, while producing the most accurate textual instructions, offered little in the way of decorative art, resulting in a plain but functional guide.

Across the attempts, the models commonly misread illegible handwriting, preserved original misspellings, and invented items that were never mentioned in the source material. Even after multiple rounds of feedback, they often retained erroneous words and showed a limited ability to apply domain‑specific knowledge, such as understanding that whole apples are not placed directly into a pie crust.

Implications

The experiment demonstrates that current generative AI tools can transform raw, handwritten content into polished visual formats, yet they still struggle with the nuances of imperfect input. While the images created were aesthetically pleasing, the inaccuracies in the instructions reveal a gap between superficial generation and genuine comprehension. Users seeking reliable, error‑free outputs for practical tasks may need to manually edit AI‑produced material, especially when the source contains handwriting quirks or spelling errors.

Conclusion

The test underscores both the potential and the current shortcomings of AI in handling real‑world, messy data. As AI models continue to improve, their ability to correctly interpret and refine handwritten notes will likely become more robust. For now, the technology offers a useful starting point for creative projects but still requires human oversight to ensure accuracy and practicality.

Source: techradar.com